Chinese AI companies DeepSeek and Alibaba released major model updates within hours of each other, a significant development for the open-source AI community. Early testing suggests both models rival top commercial offerings from OpenAI and Anthropic in some areas.

DeepSeek Releases V3-0324 with Superior Coding Abilities

DeepSeek has released a new version of its V3 model, labeled V3-0324, on Hugging Face without any formal announcement or media fanfare. According to community testing, the model appears to be a significant upgrade despite being classified as a “minor version update.”

The V3-0324 model features:

- 671 billion total parameters using a Mixture of Experts (MoE) architecture, with 37 billion activated parameters

- Coding capabilities that, according to community tests, match or even exceed Claude 3.7 Sonnet in certain scenarios

- MIT license, making it more commercially friendly than previous versions

- Innovative load-balancing strategies to improve MoE efficiency
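
To make the "671 billion total, 37 billion activated" figure concrete, here is a minimal Python sketch of how a Mixture of Experts layer sends each token to only a few experts. It is a generic softmax top-k router written for illustration, not DeepSeek's actual routing or load-balancing code; the function names, the 8-expert toy setup, and `k = 2` are all assumptions. Only the two parameter counts come from the figures above.

```python
import math
import random

TOTAL_PARAMS_B = 671   # total parameters in billions (figure quoted above)
ACTIVE_PARAMS_B = 37   # parameters activated per token in billions (figure quoted above)


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters used for any single token."""
    return active_b / total_b


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Hypothetical helper: a generic top-k gate, not DeepSeek's router.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


if __name__ == "__main__":
    print(f"active share per token: {active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B):.1%}")
    rng = random.Random(0)
    toy_logits = [rng.gauss(0, 1) for _ in range(8)]  # toy router scores for 8 experts
    print(route_top_k(toy_logits, k=2))
```

By this arithmetic, roughly 5.5% of the model's parameters are active for any one token. Because a router like this can favor a handful of experts and leave others idle, load balancing matters for MoE efficiency.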

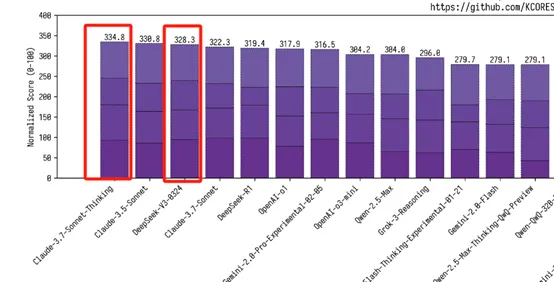

In community testing, the model scored 328.3 on the kcores-llm-arena coding benchmark, ahead of the standard version of Claude 3.7 Sonnet (322.3) and close to the chain-of-thought version (334.8).

“This is insane,” wrote one developer on social media. “The new DeepSeek V3 update is way bigger than expected. I just built a ‘Soundwave Visualizer’ game in one shot with incredible results. No doubt, it’s easily the most powerful fully free AI available right now.”

Users have particularly praised the model’s ability to generate complete, functional frontend code with a single prompt. One user demonstrated how the model could create an entire landing page with just one instruction, claiming it outperforms OpenAI’s o1-pro and GPT-4.5 for frontend development despite being completely free.

Alibaba Launches Qwen2.5-VL-32B for Local Deployment

Coinciding with DeepSeek’s release, Alibaba’s AI team unveiled Qwen2.5-VL-32B-Instruct, a new multimodal model optimized for visual language tasks and local deployment.

The 32B-parameter model joins Alibaba’s existing Qwen2.5-VL family (which includes 3B, 7B, and 72B versions) and features:

- Enhanced mathematical reasoning capabilities

- Improved responses aligned with human preferences

- Superior image analysis and visual reasoning

- Strong performance on text tasks comparable to other leading models in its size class

“72B too big for VLM? 7B not strong enough? Then you should use our 32B model, Qwen2.5-VL-32B-Instruct!” Alibaba’s Qwen team announced on social media. “This time, we further optimize this VLM with reinforcement learning and have found significant improvements in human preference and also mathematical reasoning.”

Benchmark comparisons show Qwen2.5-VL-32B outperforming similarly sized models like Mistral-Small-3.1-24B and Gemma-3-27B-IT, and even surpassing Qwen’s own 72B model on some metrics.

The model is already available for testing on Qwen Chat and has been added to the MLX Community for local Apple Silicon deployment.
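
For readers wondering whether a 32B model is realistic on their own hardware, the following Python sketch gives a back-of-the-envelope estimate of the memory needed for the weights alone. It assumes a nominal 32 billion parameters (the exact count may differ) and ignores the KV cache, activations, and the overhead of any particular quantization format, so treat the numbers as a lower bound. The function name and the precision levels are illustrative choices, not settings taken from Qwen or MLX.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


NOMINAL_PARAMS = 32e9  # assumption: the "32B" in the model name, taken at face value

if __name__ == "__main__":
    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: ~{weight_memory_gib(NOMINAL_PARAMS, bits):.1f} GiB")
```

Under these assumptions the weights take roughly 60 GiB at 16-bit precision and about 15 GiB at 4-bit, which helps explain the appeal of a mid-sized model between the 7B and 72B versions for local use.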

Open Source Rising

The nearly simultaneous releases from these Chinese AI companies have sparked discussion about the rapid advancement of open-source AI models. On Hacker News, users commented that “open source will win” and that “Sam Altman was wrong” about the viability of open-source AI models competing with commercial offerings.

Industry observers have noted the curious timing of these releases, as DeepSeek and Qwen have repeatedly launched new models within hours of each other, leading some to jokingly speculate about coordination between the Hangzhou-based companies.

Both models are now available for developers to test and build into their applications. Together, they mark a significant step toward making powerful AI capabilities accessible and commercially viable under open-source licenses.
